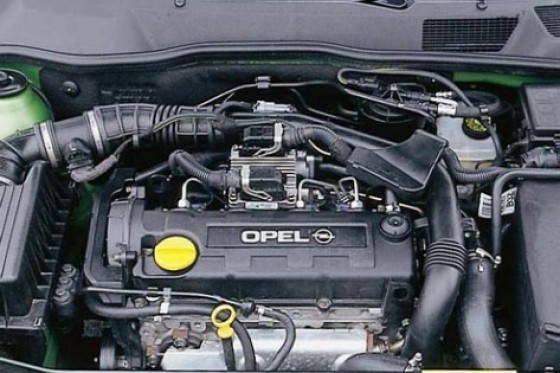

Glow plug relay - Diesel engines 1.7 * 1.9 * 2.0 * 2.2 - 6235303 1237976 55354141 9132691 OPEL - GM Opel Parts - Online Store Opel-Sklep.com

Glow plug relay, glow time controller, Opel Astra Combo Corsa Meriva Signum Sintra Vectra Zafira 1.7 1.9 2.0 2.2, genuine GM 55354141 6235303 - glow plug relays / relays - Kolmot Auto Elektronika

Glow plug relay OPEL Astra G II 1.7 2.0 6235303 for 309 zł from Częstochowa - Allegro.pl - (7143976887)

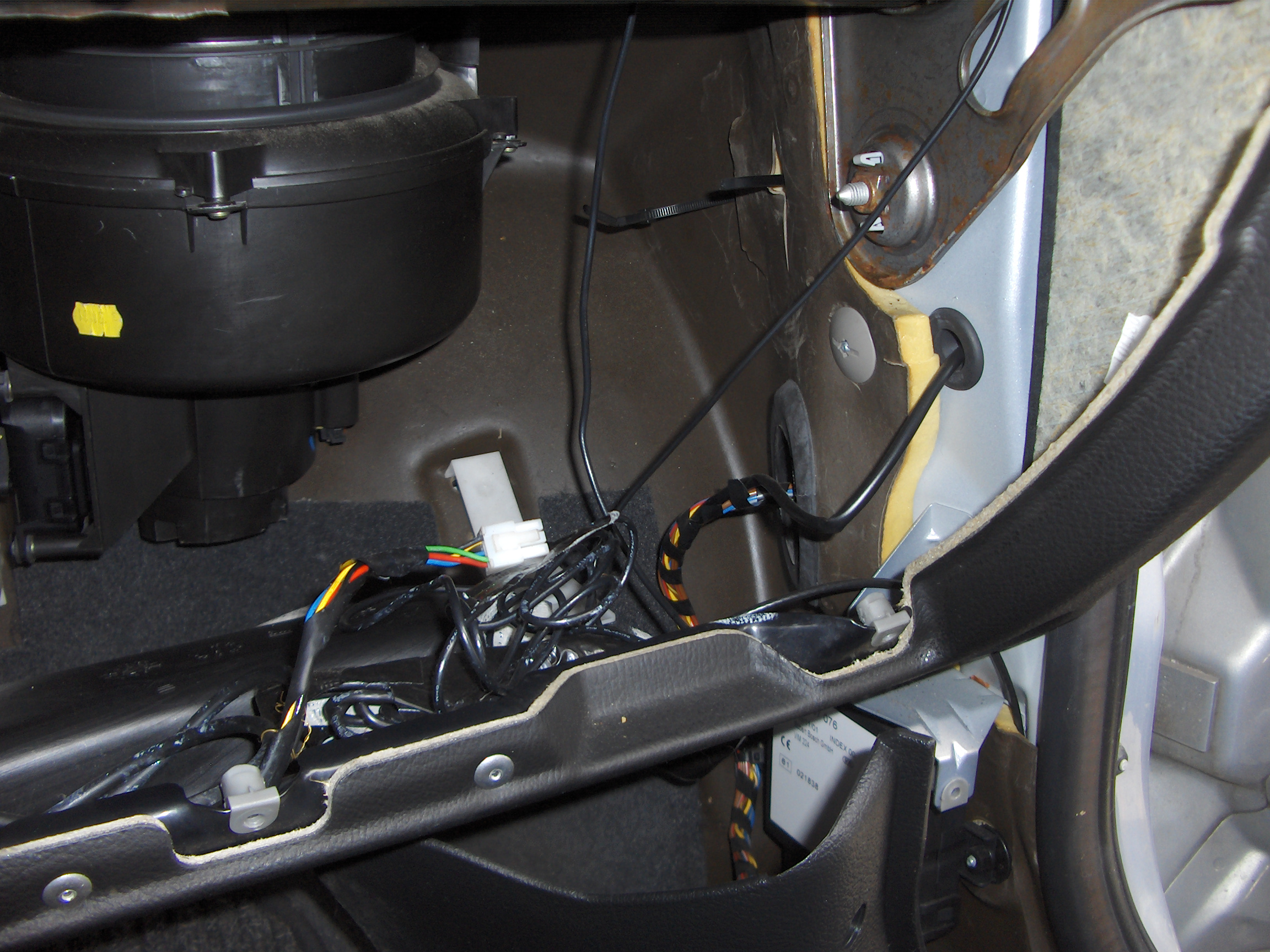

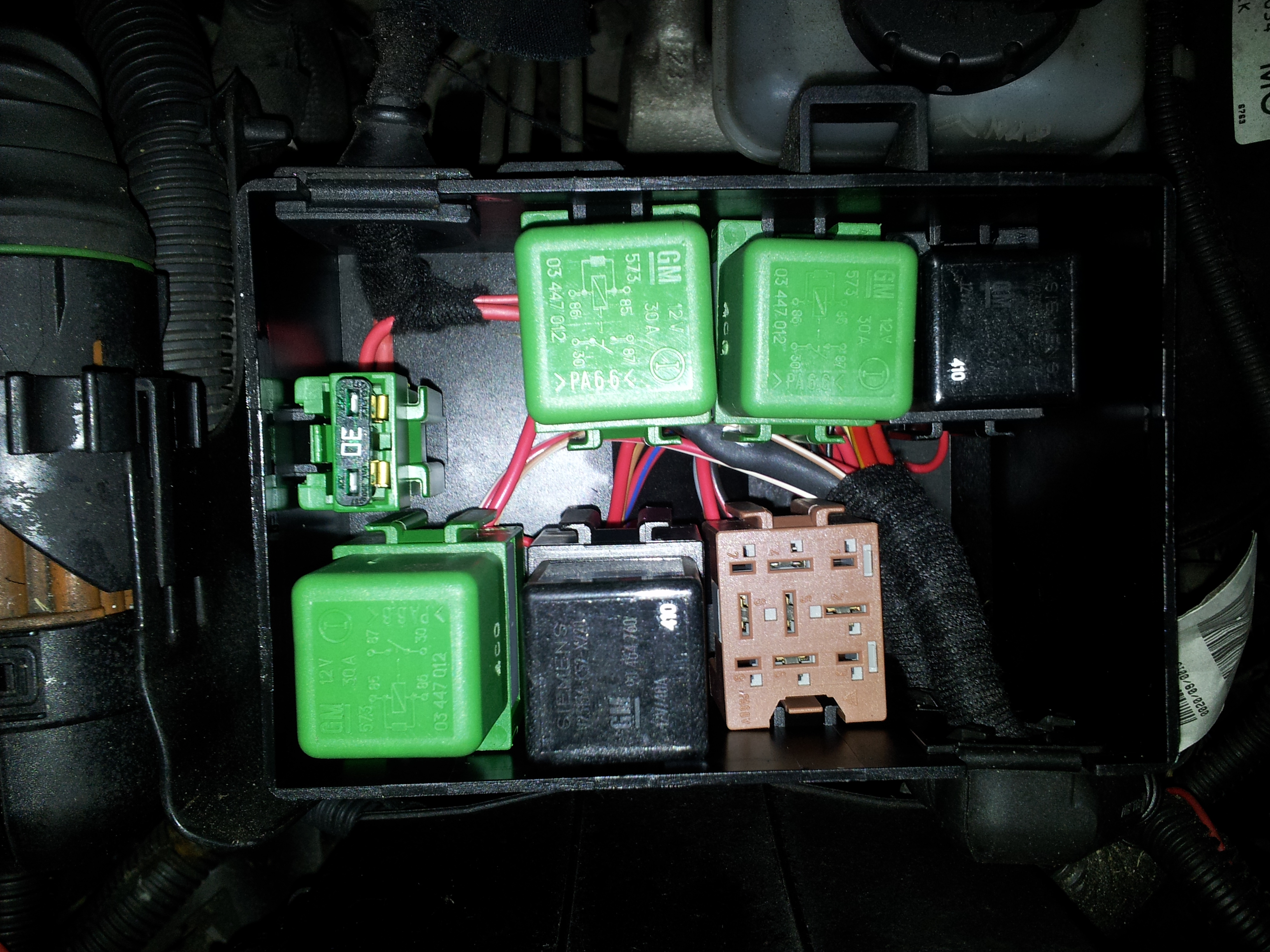

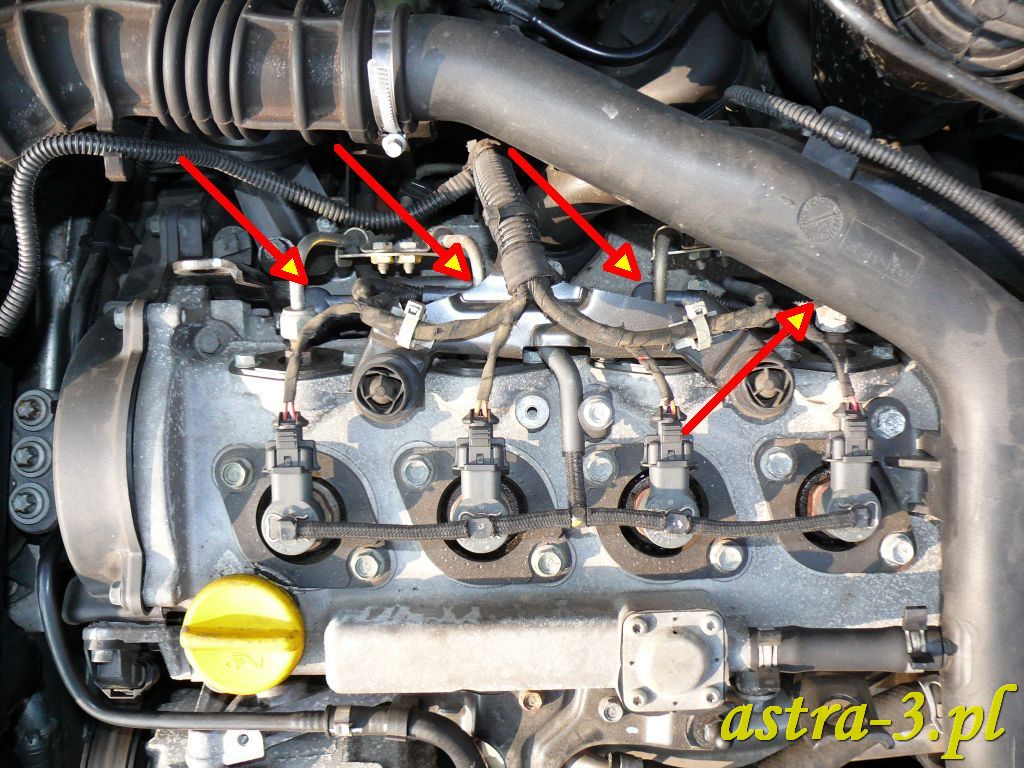

Glow plug controller - how to check it - Opel Astra - Diesel Engine - Forum OPEL24 - a place for fans of four wheels